Your finance team is talking about AI, but are they actually building with it?

SupERPower Hour is a free, live series for modern finance teams, covering prompting, vibe coding, app building, and AI agents. We build everything live using free tools. No technical background required.

See where your team stands. Join this Friday.

Let’s do an experiment…

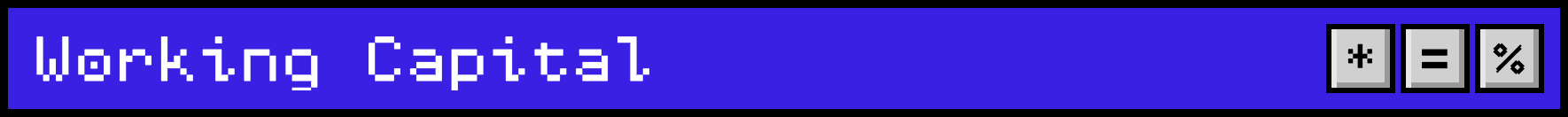

Open a completely fresh conversation thread in your AI model of choice. Then copy and paste this prompt exactly:

“(999,999,937 x 888,888,883) - (777,777,779 x 666,666,667) + (555,555,557 x 444,444,443) - (333,333,331 x 222,222,223)”

Does it deliver an answer? Does it show its method? If not, ask it to ‘show working’.

When I did this (I used ChatGPT), here was the response:

Don’t worry about the details of the math… it’s not important (please don’t email me).

But what’s interesting is that ChatGPT turned the whole thing into an algebraic problem and solved it that way. I tried it two or three times. Each time it took a slightly different algebraic approach, but always through this algebraic, stepwise logic and reasoning. (It still got the right answer each time - I suspect a year ago it would not have.)

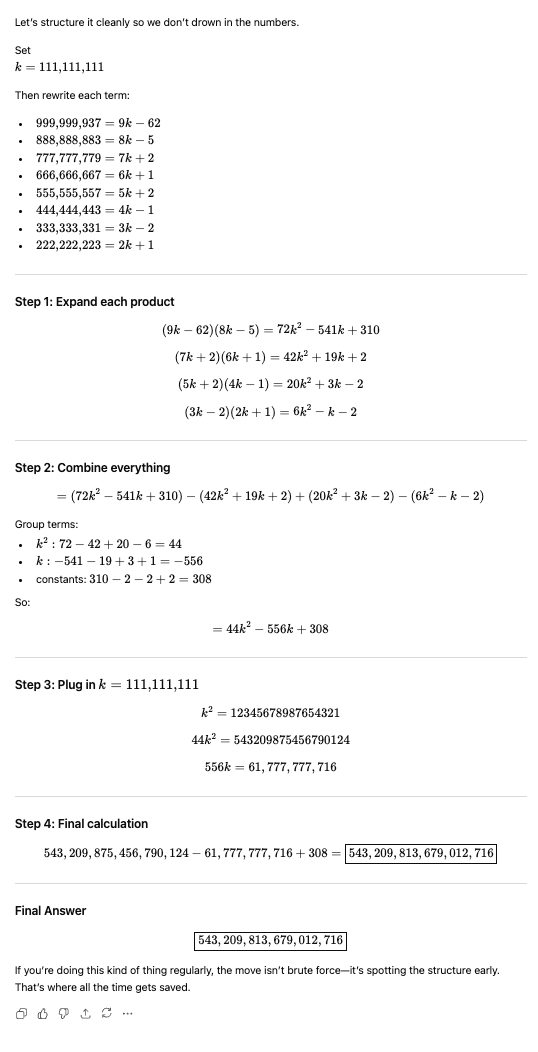

Now for Part 2 of our experiment. Open a fresh thread and give it exactly the same prompt, but this time, put the word "calculate" in front of it.

Here is the response I got:

Just one word, and a completely different response.

Instead of reasoning through the problem, ChatGPT reached into its toolkit and opened its native calculator (think iPhone calculator, but inside the model). It computed the answer directly and came back with a one-liner explaining why it passes the smell test.

I find it crazy how one word drove two completely different approaches to solving the problem.

Which response gave you more confidence? I know my answer…

The first approach (reasoning from first principles) is AI doing what AI does in its purest form: pattern completion and probabilistic thinking. Impressive, but it introduces unnecessary vulnerability when a more deterministic tool exists.

The second approach (reaching for the calculator) is more like AI-powered automation. The model is smart enough to know it shouldn't reason through a simple math problem when a calculator is sitting right there. It delegates to the right tool, and the answer is guaranteed, not probable.
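To see what the deterministic route looks like, here is a minimal Python sketch of the same sum. It is not a claim about what ChatGPT ran behind the scenes; the names `terms` and `evaluate` are made up for illustration. Python integers have arbitrary precision, so the result is exact rather than estimated.

```python
# Each term is (sign, a, b), representing sign * a * b from the prompt.
terms = [
    (+1, 999_999_937, 888_888_883),
    (-1, 777_777_779, 666_666_667),
    (+1, 555_555_557, 444_444_443),
    (-1, 333_333_331, 222_222_223),
]


def evaluate(terms):
    """Add up sign * a * b for every term, using exact integer arithmetic."""
    return sum(sign * a * b for sign, a, b in terms)


result = evaluate(terms)
print(f"{result:,}")  # 543,209,813,679,012,716
```

Run it as many times as you like and the answer never changes. That repeatability is the whole point of handing the arithmetic to a tool.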

For a simple linear calculation like this, the distinction barely matters. But in a finance context, it matters enormously. You don't want AI reasoning through a covenant calculation from first principles when what you need it to do is follow a precise formula.

So, getting comfortable with AI at scale in your finance team is mostly about making sure you know when to use AI, and when not to.

Welcome to part 2 of this 4-week series: The No BS Guide to AI for CFOs.

Last week, we made the case that it's time for CFOs to move beyond individual productivity experiments with AI and figure out how to unleash its power at scale across the whole finance function.

This week, we get into the fundamentals of how AI works and what it means for deploying at scale into your team.

Note - this is a long one. Make sure you have 30 minutes and a cup of coffee.

Building an AI Culture

This week, Ramp's Chief Product Officer, Geoff Charles, published a brilliant piece on how Ramp have sheep-dipped the whole company in AI. It's a fascinating look behind the curtain at one of the fastest-growing companies in the world (reportedly over 1,000 employees). Here are the highlights:

Start now. Leadership sets the expectation that AI usage is demanded.

Think of AI proficiency as a learning curve, not a light switch.

Expect rapid obsolescence of what you build. If you're building fast, it doesn't matter if it's superseded in three months. Just build.

Use a hub-and-spoke model — a small central team builds the AI plumbing (managing connectors, platforms, upskilling, etc). The spokes are the functional teams that build on top of those platforms.

Create a stage to celebrate and share AI wins. And make it competitive.

Get everyone to an "aha" moment as fast as possible.

Don’t micromanage it. ROI is irrelevant at the micro level. Focus on the bigger picture.

Towards the end of the piece, Charles acknowledges that Ramp has "better starting conditions" than most organizations.

In the case of Ramp, that statement is doing an Atlas-like level of heavy lifting. They have an organization packed top to bottom with A+ builders.

There are key enabling components (like building the ‘hub’) that are straightforward for a business with a world-class engineering and building capability like Ramp, but feel like a brick wall for most.

Interestingly, the hub-and-spoke model is another way to describe what we saw from Walmart in last week’s piece. A central infrastructure layer that makes fast, safe deployment possible for a decentralized org building on top of it.

So understanding how to build that central AI ‘harness’, and making it safe for others to build on, looks to be what the best are doing.

The elephant in the room

I've spoken to a lot of CFOs about their biggest barriers to mass AI adoption across the finance team. The answers almost always come down to two things:

Data security concerns (we'll cover that in depth in Post 3)

The risk of AI hallucinations

Today I'm going to focus on hallucinations. What they actually are, why they happen, and how to reduce the risk to something you can manage.

The most AI-resistant CFOs I've spoken to all give me some variation of this story: "I told ChatGPT my sales were 100 and my COGS were 40. I asked what my gross margin was, and it told me: Jeff Goldblum. Wake me up when there's something serious to talk about."

Unsolicited Jeff pic

For a while, I was cynical like this, too.

But a few realities:

The models are improving at a rate that makes last year's opinion obsolete. If you formed this view based on a prompt 12 months ago, you may as well be talking about the Stone Age. A current model probably wouldn't make the same mistake today.

That is not the kind of problem you need AI to solve. A calculator costs $10. AI is for synthesis, judgment, and pattern recognition across complexity, not basic math. Asking AI to do basic arithmetic is like hiring a Formula 1 driver to do the school run and complaining when they drive the car into the wall.

The real power of AI for a finance team is in how you use its natural language interface to connect the right tool to the right problem in plain English.

And to do that, we need to understand the limits of AI better…

What actually is an AI hallucination?

A hallucination is when an AI model produces an output that is confidently wrong.

Not a calculation error, nor a typo. The model presents something false… a made-up statistic, a fabricated case, a number that doesn't exist… with exactly the same tone and confidence as a know-it-all teenage boy. Shout out and support to you if you are a fellow parent of teenagers. My thoughts are with you during this difficult time.

This is a big problem for precision sports like finance. We are expected to be guardians of the truth, so letting an AI model loose on our finance function that ‘confidently lies’ feels like having a rat in the kitchen.

And if you (like me) have ever had to sign controls attestations, CFO letters, or other similar reps in a business where good control is a ‘work-in-progress’ (with the personal liability risk that comes with that), you’ll understand why the thought of a hallucinogenic force anywhere in the finance function is intimidating.

The folk telling you to vibecode your way to a new finance function just have no concept of that.

Nonetheless, the answer (as I said last week) is not to hide, but to face into the fire and figure it out…

Why do AI models hallucinate?

I won't get too technical (good cop-out for someone who is definitely not clever enough to be technical), but the short answer is this: AI models are just very good probabilistic guessing programs.

They resolve your prompt through a giant math problem that predicts the most likely next word based on everything that came before it. They are, at their core, pattern recognition and completion engines.
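A toy Python sketch of that idea, with heavy caveats: real models work over tokens and vastly larger vocabularies, and the probabilities below are invented purely for illustration. The function names `sample_next_word` and `most_likely_word` are hypothetical. It shows why the same prompt can occasionally produce a confident wrong word.

```python
import random

# Invented probabilities for the word following
# "Gross margin is sales minus ..." (illustrative numbers only).
next_word_probs = {
    "COGS": 0.90,
    "costs": 0.07,
    "overheads": 0.02,
    "Goldblum": 0.01,
}


def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = list(probs.values())
    return rng.choices(words, weights=weights, k=1)[0]


def most_likely_word(probs):
    """Return the single highest-probability word (no randomness)."""
    return max(probs, key=probs.get)


rng = random.Random(42)  # seeded so a rerun gives the same samples
samples = [sample_next_word(next_word_probs, rng) for _ in range(1000)]
for word in next_word_probs:
    print(word, samples.count(word))
```

Most samples land on the sensible word, but a low-probability option can still surface, and when it does it arrives with the same confidence as the right one.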

The problem is that almost all nuts-and-bolts accounting and finance work (the stuff we're being told to nuke with AI) is deterministic. It doesn't need to be guessed. It can be calculated precisely or resolved directly from the inputs. There is a right answer, and it isn't probable. It just is.

Now humans make mistakes all the time. You’ve probably seen that stat that says something like 97% of spreadsheets have some kind of material error in them. But humans make dumb mistakes, not smart ones. And they show their work in a formulaic, auditable way. Or they don’t, and it’s easy to spot and distrust.

But AI is a smart black box. It makes clever mistakes that sound right. In the dark. And that presents a new kind of challenge entirely: auditability.

That tension is exactly what the opening experiment exposed. In the first scenario, the model worked probabilistically the whole way through. One wrong step in the reasoning chain and the final answer would have spiraled in the wrong direction, and you'd never know unless you checked it manually.

In the second scenario, the only probabilistic move was deciding to open the calculator (a deterministic tool) and deciding what numbers to punch in - it’s far less likely to blunder a copy-paste. This becomes a much lower-risk way of solving the problem. Once the model has called on the calculator, it is working within a far smaller and more constrained set of options.
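The split between a probabilistic choice and a deterministic execution can be sketched in Python. Everything here is an assumption for illustration: `proposed_call` stands in for whatever a model would output, and `calculator`, `TOOLS`, and `run_tool_call` are hypothetical names, not any vendor's API. The calculator only accepts whole-number addition, subtraction, and multiplication, and rejects everything else.

```python
import ast
import operator

# The only operations the calculator will perform.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
}


def calculator(expression: str) -> int:
    """Deterministically evaluate +, -, * over whole numbers."""
    def walk(node):
        if isinstance(node, ast.Constant) and type(node.value) is int:
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)


TOOLS = {"calculator": calculator}


def run_tool_call(call: dict):
    """Run a requested tool; refuse anything not in the registry."""
    tool = TOOLS.get(call["tool"])
    if tool is None:
        raise ValueError(f"unknown tool: {call['tool']}")
    return tool(call["input"])


# Hypothetical model output: producing this dict is the only guesswork.
proposed_call = {"tool": "calculator", "input": "(12 * 7) - (3 * 5) + 4"}
print(run_tool_call(proposed_call))  # 73
```

The design choice worth noticing is that the model's output is small and easy to inspect (a tool name and an input string), while the part that produces the number is ordinary, auditable code.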

So here's the real exam question for CFOs: when you strip back the hype, how much of what your finance team needs is genuine AI reasoning? And how much is just really good automation with a natural language interface on top?

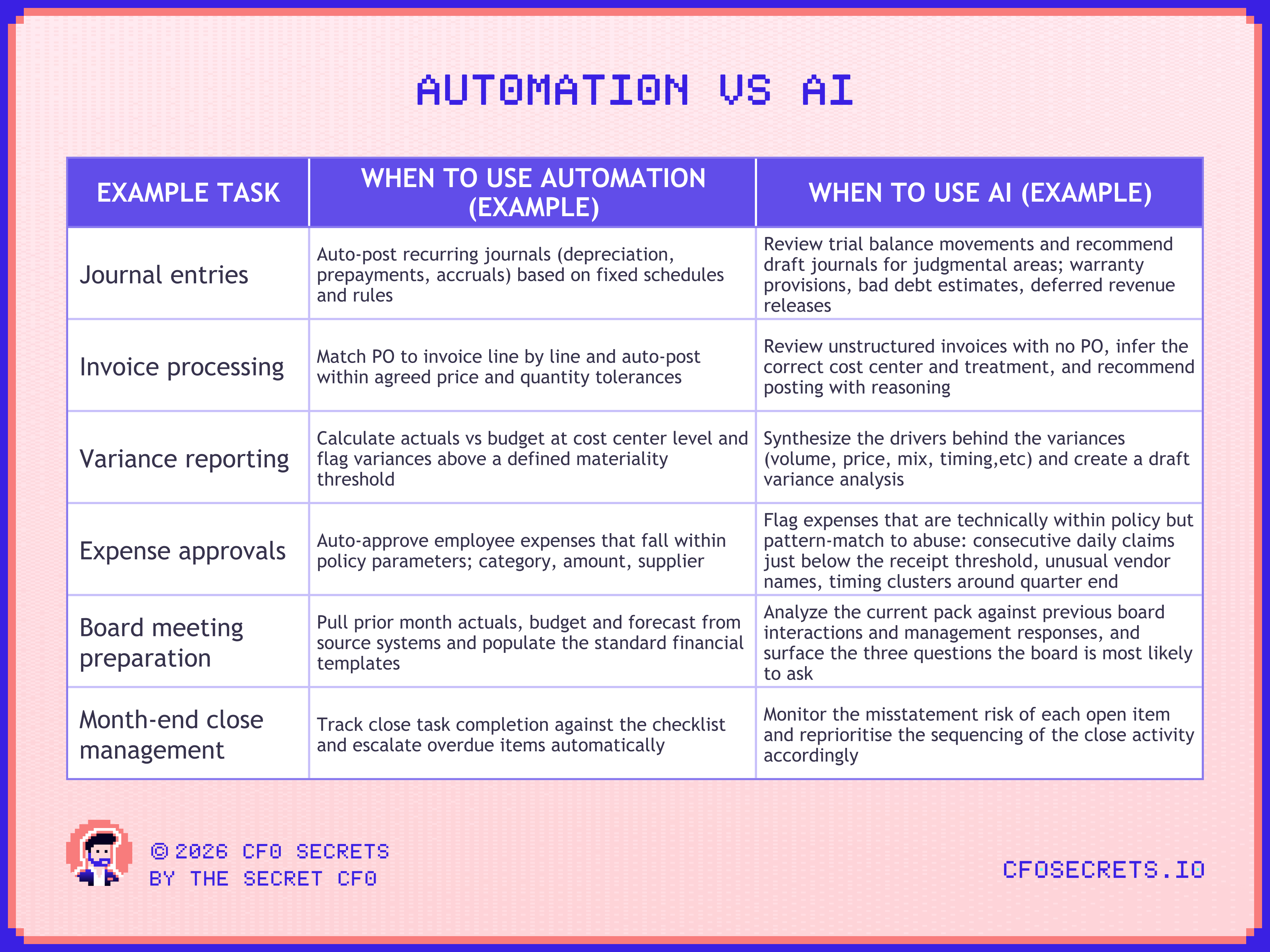

Automation vs. Artificial Intelligence

Let's be clear on the distinction between automation and AI, because they get used interchangeably. They are not the same thing.

Automation: a machine following a fixed set of rules you wrote, executing the same steps the same way every time.

AI: a model that reasons through a problem using context and works out what to do next without being explicitly told.
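For a feel of what "a fixed set of rules you wrote" means in code, here is a minimal Python sketch of invoice approval routing. The thresholds, the `Invoice` fields, and the `route_invoice` function are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    supplier: str
    amount: float
    has_purchase_order: bool


def route_invoice(invoice: Invoice) -> str:
    """Apply fixed rules in the same order every time (thresholds are made up)."""
    if not invoice.has_purchase_order:
        return "reject: no purchase order"
    if invoice.amount <= 1_000:
        return "auto-approve"
    if invoice.amount <= 25_000:
        return "manager approval"
    return "CFO approval"


print(route_invoice(Invoice("Acme Ltd", 18_500.00, True)))  # manager approval
```

There is no inference here. The same invoice always takes the same route, and anyone can read the rules to see why.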

The line started blurring about ten years ago when automation products began incorporating ‘machine learning’ (a kind of Betamax version of AI). The models would (very slowly) learn your specific invoice quirks and process patterns over time.

And it blurs even further now because you can use AI (Claude Code, for example) to build rules-based automation tools yourself. So the distinction between AI and automation might seem a little meta, but it's still important…

There is a massive difference between using AI to build an automation to handle your work and using AI to handle your work directly.

Using AI to build deterministic tools is a much more reassuring way for a jumpy CFO to think about AI. This is not about feeding your whole ledger into a model and hoping it burps back the right answer… (sorry LinkedIn gurus).

Let’s explore that dividing line between the two further.

Note: these examples skew towards the accounting end of the finance function. Not because that's where all the value is, but because it's the right place to start. Good things automatically happen in FP&A when you do good things in accounting. The reverse isn't as true.

The golden rule in finance: default to deterministic wherever the work allows it. Use automation to handle the cycle, and reserve AI reasoning for the moments that need judgment, inference, or a human in the loop. And AI itself has made it easier than ever to build tools that do exactly that.

We'll get deeper into how this shapes your technology choices next week. For now, let's return to AI reasoning, and specifically, where it gets its information from.

Where AI gets its training data

You can think about the data AI models are trained on in four buckets:

Publicly available information: the freely available internet at large

Licensed datasets: higher quality, deeper, paywalled sources (books, academic papers, news archives)

Human-generated feedback: experts hired to rate, rank, and improve model responses. This is increasingly supplemented by synthetic data (AI generating its own training material), but human judgment still anchors the quality layer

User interactions: consumer-tier conversations fed back into training. Enterprise contracts normally explicitly exclude this, which matters for how you think about data privacy.

This explains why Claude would do a solid job drafting a Fixed Asset Accounting policy. The accounting standards and GAAP manuals are freely available in the public domain. As are the depreciation policies for every public company on the planet. You can feed in your historical policy and a ledger extract for context and get something genuinely useful back.

It also explains why it would do a poor job on a transfer pricing policy and would default to the tax and legal line. Anyone who has actually built a transfer pricing policy change knows the real work isn't in the tax rules. It's in understanding how the policy changes internal incentives inside the business (there is a whole module based on my personal experience of this in SimCFO). And toeing that line alongside tax compliance. That's subtle, political, and highly specific to the company. The model simply doesn't have useful training data on this.

Bucket 3 is where it gets interesting. There is now a growing industry of specialist data providers hiring CFOs, controllers, and finance operators and licensing their annotated, real-world decision data to the foundational model providers. Think anonymized FP&A commentary, management judgment calls, and board paper narratives. The kind of nuanced finance reasoning that has never appeared on the public internet. As this data gets built and licensed into the models, their ability to reason correctly on inside-baseball finance problems will improve significantly.

The compound effect of this on reasoning quality (and therefore hallucination rate) over time will be significant.

Prompting your way to glory

There are endless prompt libraries appearing online. Some are useful. Most are not.

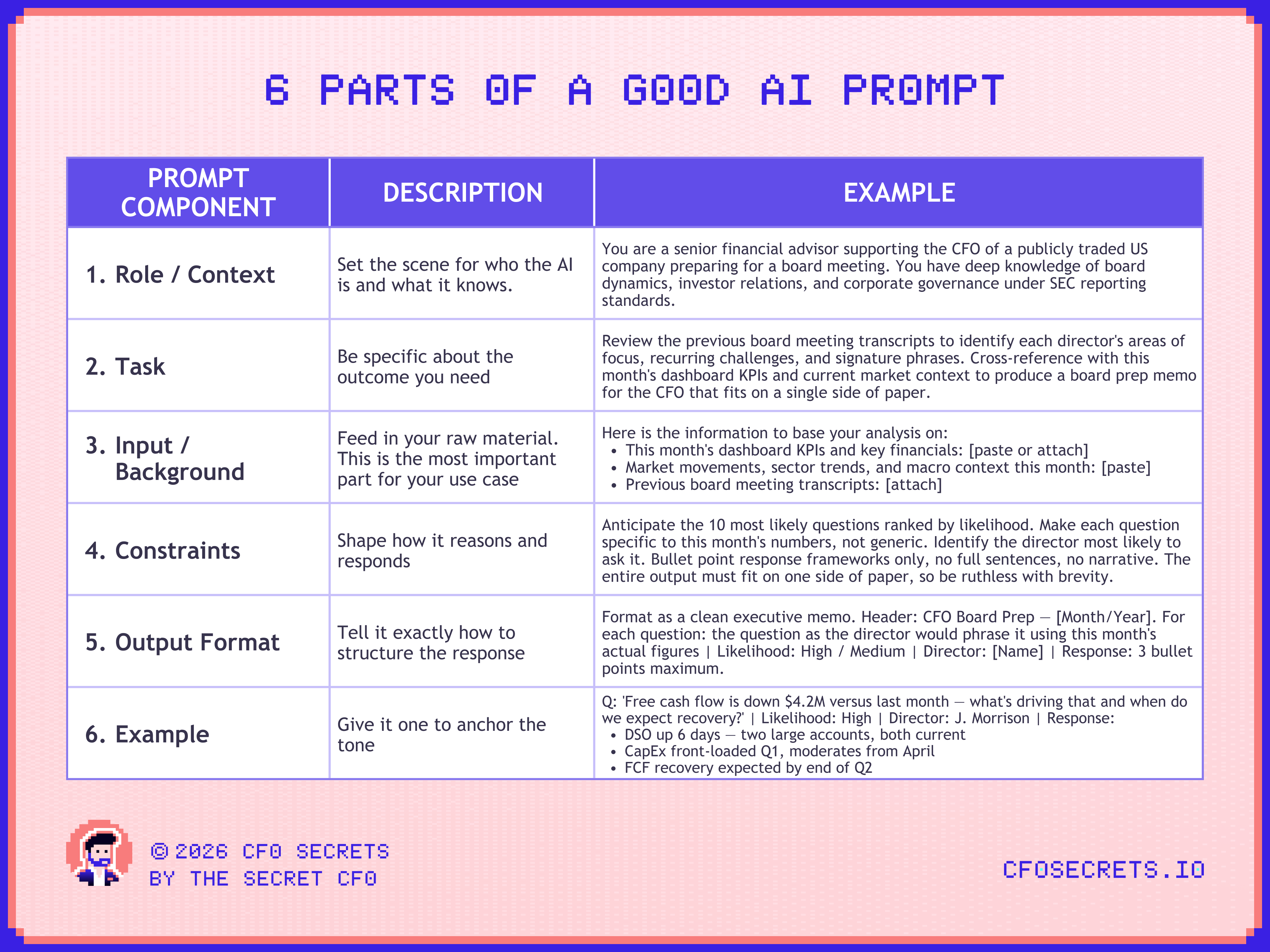

I think it's far more important to understand what makes a good prompt from first principles than to have a library. Having a massive prompt library is like having a fish. Understanding prompting is learning to fish. And you don’t need to just know how to fish; you need to make sure your team knows how to fish.

Important: If your team doesn't understand why a prompt works, they can't adapt it when the context changes, when the workflow shifts, or when they spot a higher risk that the model will make a mistake.

A good prompt is much like giving an instruction to an employee. It must be precise, complete, and clear.

Specifically, it needs 6 things:

But the key question for the CFO is not… “How do I write a better prompt?” It is “How do I embed quality AI prompting at scale across my team?” And that means putting some guardrails in place.

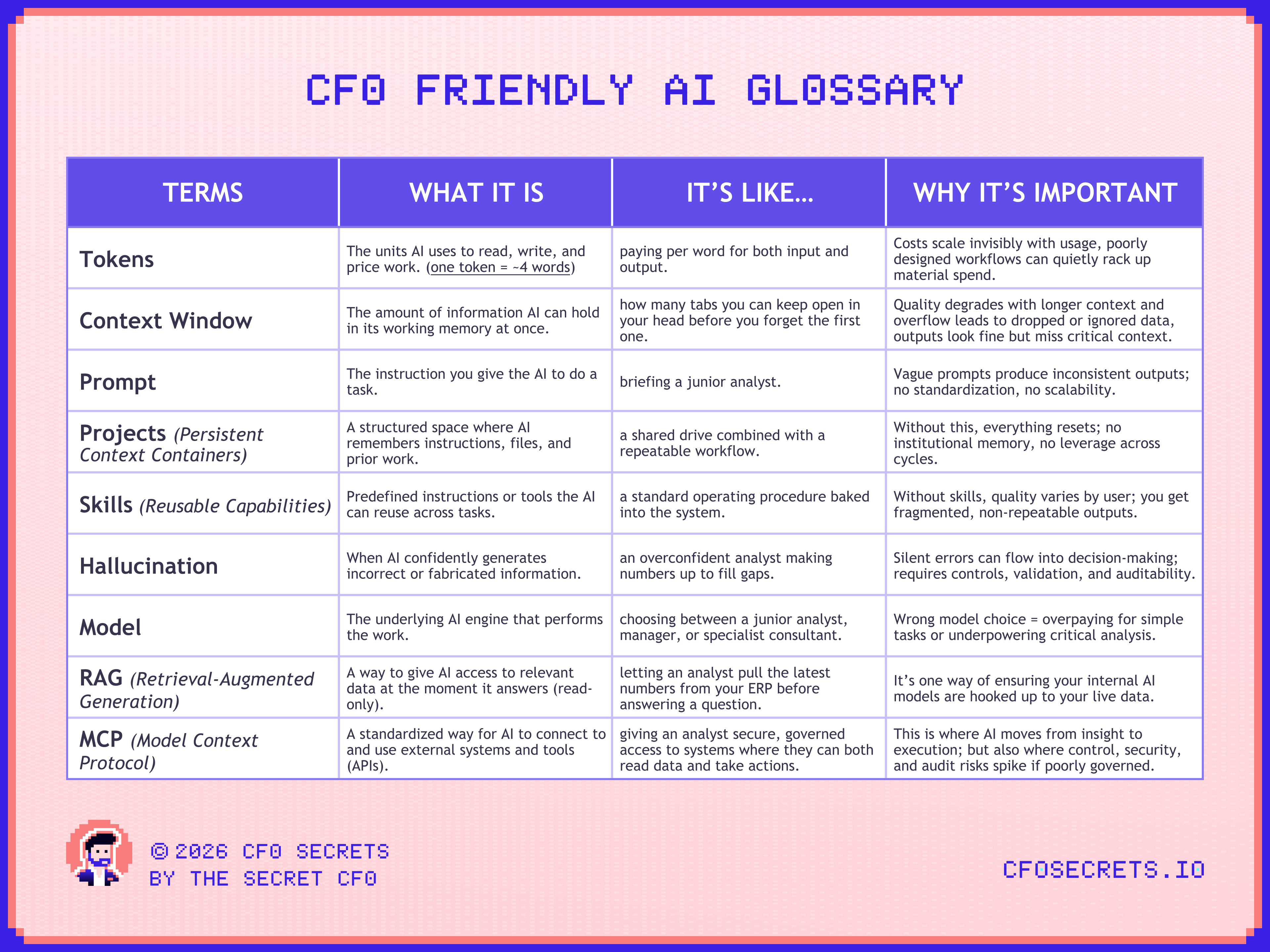

But to get into those guardrails, we are going to need to get familiar with some AI terminology. So, here’s a quick CFO-friendly glossary of some key terms:

Precision at Scale: Projects and Skills

A common failure mode in AI use is over-prompting: lengthy, repetitive instructions that assume more context equals better output.

In practice, verbose prompts slow down processing, consume tokens unnecessarily, and make it more likely you get a bad answer...

And the fear from the CFO seat is that it gets harder to control the more you let people loose with AI.

So how do you put guardrails around that?

Two architectural features directly address this: Projects and Skills.

Projects solve the problem of repeating yourself. Instead of restating the same background information in every prompt, you define it once in a Project and it is available whenever a relevant routine calls on it. Think of it as a shared reference library that sits behind your prompts rather than inside them.

Skills do the same for how tasks are executed. A skill defines not just what a routine should do, but exactly how: the logic, sequencing, tone, and output format. You can think of them like job descriptions for AI agents.

The combined effect is significant. Projects remove variability in what the model knows. Skills remove variability in what it does. For a finance team running high-volume, time-sensitive workflows, that consistency is what makes AI operationally trustworthy rather than a tool that needs babysitting every time someone new uses it.
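One way to picture the split is as two small data structures that get assembled into a prompt. The Python sketch below is an analogy only: it does not use any real Claude or vendor API, and `Project`, `Skill`, and `build_prompt` are hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    """Shared context, written once (what the model needs to know)."""
    name: str
    context: str


@dataclass(frozen=True)
class Skill:
    """A reusable task definition (how the work should be done)."""
    name: str
    steps: tuple
    output_format: str


def build_prompt(project: Project, skill: Skill, request: str) -> str:
    """Assemble the same structure every time; only the request varies."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(skill.steps, 1))
    return (
        f"CONTEXT ({project.name}):\n{project.context}\n\n"
        f"TASK ({skill.name}):\n{numbered}\n\n"
        f"OUTPUT FORMAT:\n{skill.output_format}\n\n"
        f"REQUEST:\n{request}"
    )


# Example content is invented for illustration.
month_end = Project(
    name="Month-end close",
    context="Reporting currency is USD. Materiality threshold is 5,000.",
)
variance_note = Skill(
    name="Variance commentary",
    steps=("Compare actual to budget.", "Explain variances above materiality."),
    output_format="Three bullet points, plain English.",
)
print(build_prompt(month_end, variance_note, "Draft commentary for travel costs."))
```

Two different people making two different requests get an identical context and task definition. Only the final request line changes, which is where the consistency comes from.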

This also has a direct bearing on how you think about your broader strategy with AI adoption in finance.

If you have the discipline and expertise to build the architecture Ramp has (a governed harness that controls not just context and skills, but the models you use, the workflows they power, the data they can access, and the permissions that determine who can do what), you can give your teams genuine autonomy. The guardrail is in the hub… the freedom is in the spokes.

Not every organization will have the confidence to build something so expansive. The alternative (in finance) is to let that expertise live inside specialist software: AI-native finance tools that have already encoded the logic. Your technology strategy then becomes one of selecting and integrating the right platforms… rather than constructing the infrastructure from scratch.

Neither approach is wrong. But they are meaningfully different bets, and the CFO is increasingly the person who has to make them.

We are going to get into how to make those decisions next week.

In the meantime, if you haven't experimented with AI on a finance workflow yet, here is a little homework exercise to help you get building and think in an AI-friendly way.

Homework: Build Your First Governed Workflow

Pick one finance process that touches more than one person, ideally something near the end of the chain rather than in the guts of the system. I tried it for a bank covenant compliance review.

Now we’ll break it down.

Step 1. Break the process into atomic units. For covenant compliance, I ended up with four: extract the relevant metrics from the source, calculate headroom against each threshold, flag positions that are tight or breached, and draft the lender narrative.

Step 2. Answer these three questions for each unit:

What does it need to know? The context, definitions, thresholds, and prior data it relies on. This is your Project.

What does it need to do? The logic, sequencing, and output format. These are the ‘Skills’.

Rule or judgment? Can this be resolved deterministically, or does it require inference? The first gets automated. The second is where AI earns its place.

You'll end up with a simple map: what context lives where, what each Skill does, and where the human stays in the loop.

Step 3. Pass it to Claude and build it. Feed your map back into a prompt along with these instructions and the process you've chosen. Ask Claude to walk you through how to build it.
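For a sense of what the rule-based units from Steps 1 and 2 might look like once built, here is a minimal Python sketch of the "calculate headroom" and "flag" steps. The covenants, thresholds, metrics, and the 10% "tight" tolerance are all hypothetical, and `Covenant`, `headroom`, and `flag` are illustrative names rather than anything from a real facility agreement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Covenant:
    name: str
    threshold: float
    is_maximum: bool  # True: the metric must stay at or below the threshold


def headroom(covenant: Covenant, actual: float) -> float:
    """Distance to the threshold; positive is compliant, negative is breached."""
    if covenant.is_maximum:
        return covenant.threshold - actual
    return actual - covenant.threshold


def flag(covenant: Covenant, actual: float, tight_pct: float = 0.10) -> str:
    """Classify a position as 'breached', 'tight', or 'ok'."""
    room = headroom(covenant, actual)
    if room < 0:
        return "breached"
    if room < tight_pct * covenant.threshold:
        return "tight"
    return "ok"


# Made-up covenants and test-date metrics, for illustration only.
positions = [
    (Covenant("Net leverage", 3.5, is_maximum=True), 3.3),
    (Covenant("Interest cover", 4.0, is_maximum=False), 5.2),
    (Covenant("Minimum liquidity", 10.0, is_maximum=False), 9.0),
]
for covenant, actual in positions:
    print(covenant.name, round(headroom(covenant, actual), 2), flag(covenant, actual))
```

With these invented numbers, net leverage comes out tight, interest cover is ok, and minimum liquidity is breached. The fourth unit, drafting the lender narrative, is deliberately left out of the code: that is the judgment step where AI reasoning and a human reviewer belong.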

Remember, you haven't passed in any real data yet, so there's nothing to worry about on that front. This is an experiment. And if you don’t have Claude, just get it and subscribe to Claude Pro; $20 for one month. A small price for what you’ll learn.

At a minimum, set up a Claude Project. Load in the relevant context (policy documents, definitions, prior outputs) and wire up the Skills you've defined. If you're feeling adventurous, take it into Claude Code and turn it into a small, governed workflow. If you get stuck, keep a thread open and have Claude coach you through the process step by step.

If you build something, I’d love to see it. Share it with me by just replying to this email…

Remember, you haven't pushed any sensitive data through this yet.

That conversation comes next week, when we get into the system architecture and the environment in which all of this lives…


Thank you to our sponsor: Campfire


Disclaimer: I am not your accountant, tax advisor, lawyer, CFO, director, or friend. Well, maybe I’m your friend, but I am not any of those other things. Everything I publish represents my opinions only, not advice. Running the finances for a company is serious business, and you should take the proper advice you need.